Anatomy of an AI Agent

If you're going to give an AI agent real work to do, it's worth understanding what's inside one.

Because agents aren't just chatbots with extra steps — they're systems with components. And the more sophisticated the agent, the more those components start to look like the things that make a human coworker effective: memory, judgment, personality, the ability to learn new skills, the discipline to pick up a task without being asked. The architecture of an autonomous agent is, increasingly, an attempt to recreate the architecture of a person.

This post walks through what's actually inside one. Not how to build it — there are a thousand technical guides for that. This is the conceptual map: what the moving parts are, how they fit together, and what's missing from most of the things being sold as "agents" right now.

A quick definition before we start, in case you skipped my earlier post on agentic AI: an agent, in modern terms, is a system that uses an LLM to make decisions and take actions on its own, often using tools (a browser, your email, your calendar) to get something done. Everything in this post is a variation on that.

Start with what already exists

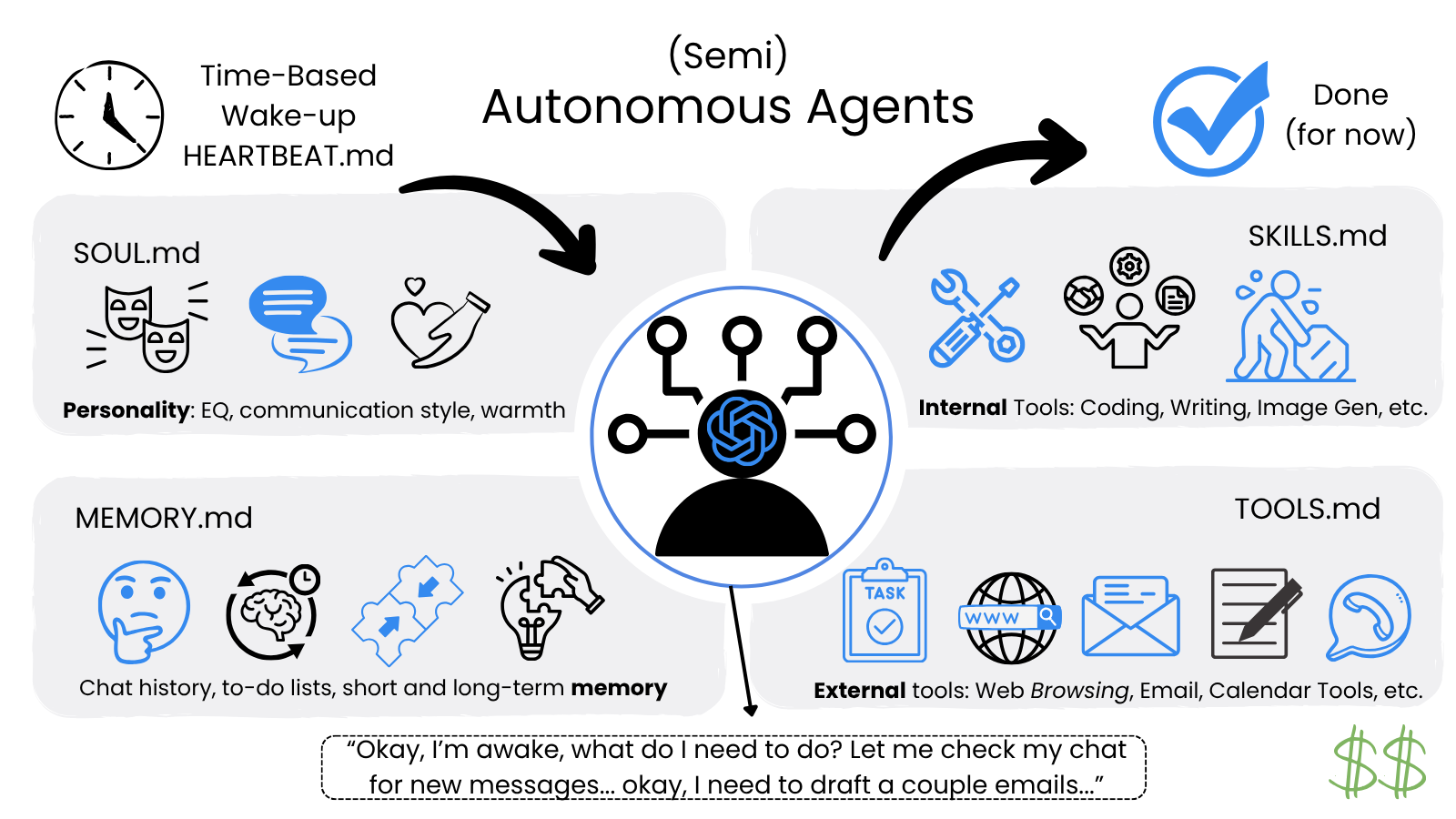

The cleanest way to understand agent architecture is to look at one that actually works. As of 2026, the closest thing we have to a real autonomous agent is OpenClaw — an open-source project by Austrian developer Peter Steinberger that hit 100,000 GitHub stars in roughly a week after its release in late 2025.

OpenClaw isn't perfect (it's not really for the average user yet, and it's expensive to run). But it caught on because it nailed six things that most "agents" don't have. Those six things are a useful starting point for the rest of this post.

1. Long-term memory. OpenClaw writes daily logs and curated facts to disk in a file called MEMORY.md. This is the simplest and most important upgrade over a standard chatbot — most chatbots forget everything the moment a conversation ends. OpenClaw remembers your preferences, your past decisions, what worked, and what didn't. Across weeks. Across months. That's what gives it any sense of continuity.

2. Personality and principles. A file called SOUL.md defines the agent's "vibe" — its core values, its non-negotiables, how it behaves under pressure. This sounds soft, but it's actually load-bearing. Without it, the agent's behavior drifts based on the prompt of the moment. With it, the agent stays consistent: protective of your time, curt and challenging when you ask for feedback, friendly when you ask for help. It's the agent's character.

3. Machine-level access. OpenClaw doesn't run in a sandbox. It runs locally on your computer with direct access to your shell, your file system, and your browser, defined in TOOLS.md. It can execute terminal commands, edit local files, navigate websites like a human, and interact with any application you have installed. This is what gives it the ability to actually do things, not just talk about them. It's also what makes it a security concern: an agent with shell access can do anything your user account can do on that machine.

4. Proactive autonomy. This is the most interesting one. A file called HEARTBEAT.md gives OpenClaw a rhythm. Every 30 minutes, the agent wakes itself up, checks a to-do list, and decides whether there's work to do. It can finish a task while you sleep. It can notice something in your email and message you about it before you've thought to ask. Most "agents" only act when prompted. This one acts on its own.

5. Multi-channel presence. OpenClaw doesn't live in a single chat window. It connects to whatever messaging apps you already use — WhatsApp, Slack, Telegram, iMessage — and treats them all as the same conversation. You can start a task in Slack and check on it from WhatsApp, and the agent maintains a single persistent session across all of them. One brain. Many surfaces.

6. Self-improving capability. The most ambitious of the six. If OpenClaw encounters a task it can't do, it can browse documentation, write a script to handle it, and save that script to a skills/ folder for future use. It writes its own tools. Slowly, the more you use it, the more it can do. That's what SKILLS.md tracks — a growing library of capabilities the agent has taught itself.

Six ideas, five of them anchored in a plain Markdown file. That's the skeleton of every meaningful autonomous agent today.
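To make the file-based design concrete, here is a minimal Python sketch of two of those pieces: the memory file and the heartbeat check. It is an illustration under assumptions, not OpenClaw's actual code. The dated-bullet format for MEMORY.md, the `- [ ]` checkbox format for HEARTBEAT.md, and the function names `remember`, `pending_tasks`, and `heartbeat_tick` are all hypothetical.

```python
from __future__ import annotations

from datetime import date
from pathlib import Path


def remember(workspace: Path, fact: str, today: date | None = None) -> None:
    """Append one dated fact to MEMORY.md so it survives the session."""
    today = today or date.today()
    memory_file = workspace / "MEMORY.md"
    with memory_file.open("a", encoding="utf-8") as handle:
        handle.write(f"- {today.isoformat()}: {fact}\n")


def pending_tasks(workspace: Path) -> list[str]:
    """Return unchecked items from HEARTBEAT.md (assumed '- [ ] task' lines)."""
    heartbeat_file = workspace / "HEARTBEAT.md"
    if not heartbeat_file.exists():
        return []
    tasks = []
    for line in heartbeat_file.read_text(encoding="utf-8").splitlines():
        stripped = line.strip()
        if stripped.startswith("- [ ]"):
            tasks.append(stripped[len("- [ ]"):].strip())
    return tasks


def heartbeat_tick(workspace: Path) -> str:
    """One wake-up: check the list, decide whether there is work, log it."""
    tasks = pending_tasks(workspace)
    if not tasks:
        return "idle"
    remember(workspace, f"heartbeat found {len(tasks)} pending task(s)")
    # A real agent would hand the task to an LLM here; this sketch only reports it.
    return f"work: {tasks[0]}"
```

A scheduler (cron, or a simple timer) would call `heartbeat_tick` every 30 minutes. Everything interesting, such as deciding how to do the task, happens in the step this sketch leaves out.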

Why these six matter

Here's the thing about that list: every one of those six components maps to something a human coworker has. Memory (the ability to remember). Personality (the ability to behave consistently). Tools (the ability to do things). A schedule (the ability to start work on your own). Multi-channel presence (the ability to be reachable). Self-improvement (the ability to learn).

Take any of them away, and the system stops feeling like a coworker.

A chatbot has tools, sometimes. But it doesn't have memory or personality or autonomy or self-improvement. It's a smart vending machine. Useful, but not a teammate.

A scheduled automation (n8n, Zapier with AI steps) has tools and a schedule. But it has no memory, no personality, no judgment beyond what you wired in. It's a vending machine on a timer.

A reactive AI assistant (ChatGPT with browsing, Claude with MCP) has tools and increasingly has memory. But it doesn't have a heartbeat — it only acts when you prompt it. So it's not really a coworker yet either. It's an extremely capable employee who only works when watched.

OpenClaw is the first widely used system to put all six together. That's why it caught on. Not because the underlying AI is smarter (it uses the same models everyone else does), but because the architecture finally matches what an autonomous agent needs to be.

What's still missing

But OpenClaw is the start, not the end. There's a lot of room for improvement. Here's what real autonomy is going to require:

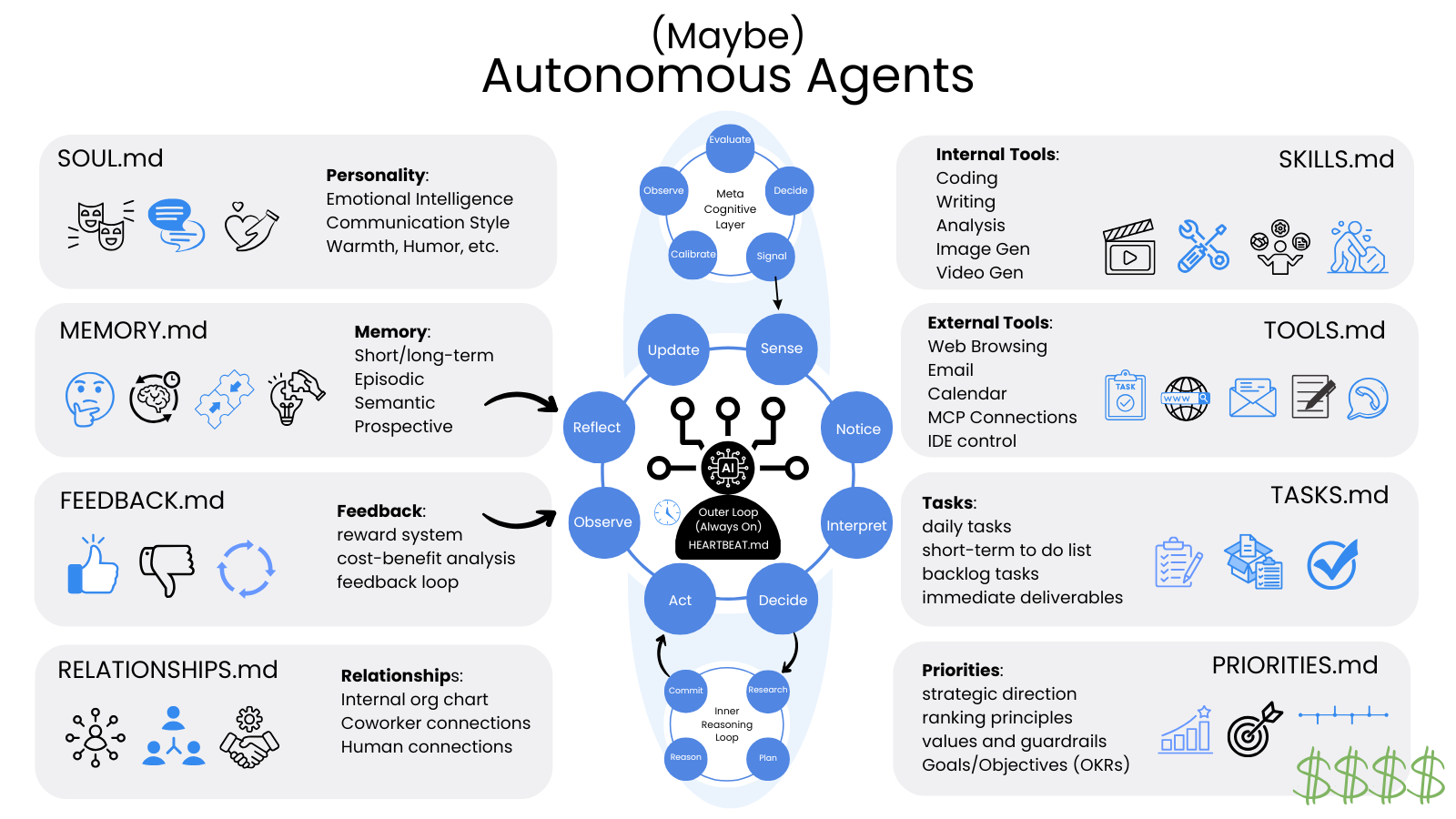

Memory needs to become layered — and self-cleaning. Right now, MEMORY.md is one bucket — a flat file of facts and logs. Real autonomous agents will need episodic memory (what happened last time, what you said in a specific conversation, what worked), semantic memory (general knowledge about your business, your industry, your relationships), prospective memory (things to do in the future, contingent on triggers), and meta-memory (knowing what it knows and what it doesn't, so it knows when to ask).
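As a rough picture of what "layered" could mean in practice, here is a small Python sketch. It is one possible shape, not a description of any shipping agent: the class names `MemoryKind`, `MemoryEntry`, and `LayeredMemory`, and the idea of tagging each entry with a topic, are assumptions made for illustration. Meta-memory is modeled in the simplest possible way, as a check on whether anything is stored about a topic at all.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class MemoryKind(Enum):
    EPISODIC = "episodic"        # what happened in a specific interaction
    SEMANTIC = "semantic"        # general facts about the business or people
    PROSPECTIVE = "prospective"  # things to do later, when a trigger fires


@dataclass
class MemoryEntry:
    kind: MemoryKind
    topic: str
    content: str
    trigger: str | None = None  # only meaningful for prospective entries


@dataclass
class LayeredMemory:
    entries: list[MemoryEntry] = field(default_factory=list)

    def add(self, kind: MemoryKind, topic: str, content: str,
            trigger: str | None = None) -> None:
        self.entries.append(MemoryEntry(kind, topic, content, trigger))

    def recall(self, kind: MemoryKind, topic: str) -> list[str]:
        """Return what is stored in one layer about one topic."""
        return [e.content for e in self.entries
                if e.kind is kind and e.topic == topic]

    def due(self, event: str) -> list[str]:
        """Prospective memory: intentions whose trigger matches this event."""
        return [e.content for e in self.entries
                if e.kind is MemoryKind.PROSPECTIVE and e.trigger == event]

    def knows(self, topic: str) -> bool:
        """Meta-memory, crudely: is there anything stored about this topic?"""
        return any(e.topic == topic for e in self.entries)
```

When `knows` returns False, the agent has a signal that it should ask rather than guess, which is the behavior the meta-memory layer is meant to enable.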

They'll also need to manage their memory the way humans do. Anthropic recently shipped a feature in Claude Code called AutoDream that runs a background process during idle periods — a sort of digital sleep cycle — to consolidate, prune, and reorganize memory files. The name is deliberate: it's modeled after how human memory consolidates during REM sleep. Without something like this, an agent's memory bloats over time with stale debugging notes, contradictory entries, and references to files that no longer exist. The agent slowly gets dumber the longer you use it. AutoDream — and the OpenClaw equivalent already published on GitHub — is the first real attempt to solve that. Expect every serious agent to grow some version of this within the next year.

Human memory works this way. Agent memory mostly doesn't, yet — but the gap is closing.
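The pruning half of that consolidation idea is easy to sketch. The Python below is a toy cleanup pass, not how AutoDream or any OpenClaw equivalent actually works: it assumes memory is a list of note lines, assumes file references are written in backticks, and uses a hypothetical function name, `consolidate`. It removes exact duplicates and drops notes that point at files that no longer exist. Resolving contradictory entries is the hard part and is not attempted here.

```python
from __future__ import annotations

import re
from pathlib import Path

# Assumption: notes mention files as `name.ext` in backticks.
FILE_REF = re.compile(r"`([^`]+\.[A-Za-z0-9]+)`")


def consolidate(lines: list[str], root: Path) -> list[str]:
    """Drop blank lines, exact duplicates, and notes about missing files."""
    seen: set[str] = set()
    kept: list[str] = []
    for line in lines:
        note = line.strip()
        if not note or note in seen:
            continue  # blank or already recorded
        referenced = FILE_REF.findall(note)
        if any(not (root / ref).exists() for ref in referenced):
            continue  # stale: refers to a file that is gone
        seen.add(note)
        kept.append(note)
    return kept
```

Running a pass like this during idle time keeps the memory file from growing without bound, at the cost of occasionally discarding a note that was still useful.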

The 30-minute heartbeat becomes a continuous cognitive loop. Right now, OpenClaw "wakes up," checks a list, and goes back to sleep. Real autonomous agents will run a continuous loop: observe → sense → interpret → decide → act → reflect → update → repeat. This isn't just a faster heartbeat. It's a fundamentally different way of operating — closer to how a person actually moves through their day, constantly noticing things, deciding what matters, adjusting course based on results.
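Here is what the shape of that loop might look like in Python. This is a deliberately tiny sketch under stated assumptions: `AgentState` and `run_loop` are hypothetical names, the "interpret" and "decide" steps are a keyword check standing in for what would really be an LLM call, and "observe" and "sense" are collapsed into pulling the next event off an inbox.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentState:
    inbox: deque[str]
    notes: list[str] = field(default_factory=list)
    urgent_words: set[str] = field(default_factory=lambda: {"urgent", "outage"})


def run_loop(state: AgentState, max_cycles: int = 10) -> list[str]:
    """Run observe -> interpret -> decide -> act -> reflect -> update cycles."""
    actions: list[str] = []
    for _ in range(max_cycles):
        # Observe / sense: is there anything new to look at?
        if not state.inbox:
            break
        event = state.inbox.popleft()

        # Interpret: a keyword check stands in for real understanding.
        is_urgent = any(word in event.lower() for word in state.urgent_words)

        # Decide: pick an action based on the interpretation.
        action = f"escalate: {event}" if is_urgent else f"file: {event}"

        # Act: a real agent would call a tool; this sketch just records it.
        actions.append(action)

        # Reflect and update: store the outcome so later cycles can use it.
        state.notes.append(f"{action} -> handled")
    return actions
```

The difference from a heartbeat is that nothing here waits for a timer. In a real system the loop would block on new events rather than exit when the inbox is empty.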

Personality becomes self-awareness. Today's SOUL.md captures the agent's "vibe" and core principles. Real autonomous agents will need more — communication style, risk tolerance, EQ, warmth, a sense of humor. Think TARS from Interstellar: not just a personality, but one that adapts to context. SOUL.md splits into a richer set of files: SELF.md (an honest assessment of strengths and weaknesses), RELATIONSHIPS.md (a map of who the agent works with and how to communicate with each person), PRIORITIES.md (what matters when goals conflict).

Tools and skills keep expanding. That covers internal capabilities (writing, analysis, image and video generation), external integrations (web browsing, email, calendar, MCP connections, IDE control), and a self-written skills library that grows as the agent encounters new problems. The boundary between "what the agent can do today" and "what it can do tomorrow" becomes blurry, because the agent is actively expanding its own capabilities.

Multi-agent coordination becomes a thing. A single agent has limits. Real productivity comes when you can have several specialized agents — one for sales, one for research, one for scheduling — that talk to each other, hand off work, and resolve conflicts. The hard parts aren't just technical: how do agents authenticate to each other? Negotiate priorities? Avoid getting stuck in loops? Most agent products today are solo systems. The future is teams.
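Of those hard parts, "avoid getting stuck in loops" is the easiest to illustrate. The Python sketch below routes a task through a chain of specialized agents and stops when an agent is visited twice or a hop limit is hit. The `Agent` class, the `route` function, and the fixed hand-off chain are all hypothetical simplifications; authentication and priority negotiation are not addressed at all.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    topics: set[str]                 # what this agent accepts
    hands_off_to: str | None = None  # who it forwards everything else to


def route(topic: str, agents: dict[str, Agent], start: str,
          max_hops: int = 5) -> tuple[str | None, list[str]]:
    """Follow hand-offs until an agent accepts the topic or a loop appears."""
    path: list[str] = []
    current: str | None = start
    while current is not None and len(path) < max_hops:
        if current in path:
            break  # already visited: the hand-offs are going in circles
        path.append(current)
        agent = agents[current]
        if topic in agent.topics:
            return agent.name, path
        current = agent.hands_off_to
    return None, path  # nobody took it; a human should decide
```

Returning the path along with the result matters: when no agent accepts a task, the trail of hand-offs is what a human needs to see to fix the routing.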

None of this is fully built yet. OpenClaw nailed six primitives, and the rest is roadmap. But the trajectory is clear, and every one of these capabilities already exists somewhere — in research papers, in prototypes, in someone's GitHub repo. Stitching them into something reliable, affordable, and safe is the work of the next few years.

Why this matters

If you've made it this far, you might be wondering why any of this is worth knowing.

The honest answer is: because the next few years of AI productivity gains will run through this architecture, and most of the noise you're hearing about agents right now is selling you a system with two or three of the six pieces and calling it complete. Knowing what an actual autonomous agent looks like — what's in it, what's missing from it — is the difference between recognizing a real product and falling for a marketing pitch.

It's also, if I'm being honest, just interesting. Watching a field figure out, in real time, that the right architecture for a "thinking machine" looks an awful lot like the architecture of a person — that's a story worth understanding even if you never deploy an agent yourself. We're learning what makes a coworker by trying to build one. The blueprint is becoming legible.

OpenClaw isn't the final form. Whatever replaces it in 2027 or 2028 will look different, do more, cost less, and feel more natural. But the bones will be the same. Memory. Personality. Tools. A heartbeat. Multi-channel presence. Self-improvement.

That's what's inside an agent. That's what to look for. That's where this is going.

For the broader landscape — where agents came from, where they're going, and the governance and cost questions slowing real-world adoption — see Agentic AI: Where We Are and Where We're Going.