Agentic AI: Where We Are and Where We're Going

The term "agent" gets thrown around a lot, but much like "AI" has meant many different things over the years, "agent" — and "agentic AI" — has evolved. In this post I'll walk through the history of agents, where we're at today, and where we're going.

From Agents to Agentic to Autonomous Agentic Agents

The word "agent" isn't new in AI. The foundational textbook in the field — Russell and Norvig's Artificial Intelligence: A Modern Approach — has been organized around the concept of "intelligent agents" since 1995. So when someone tells you AI agents are brand new, they're right and wrong at the same time. The idea is decades old. What's new is that we finally have models good enough to make the idea actually work.

So what is an AI agent? At its simplest: an AI agent is a system that uses an LLM to make decisions and take actions on its own, often using tools (like a web browser, your email, or your calendar) to get something done. Everything else — and there's a lot — is variation on that.

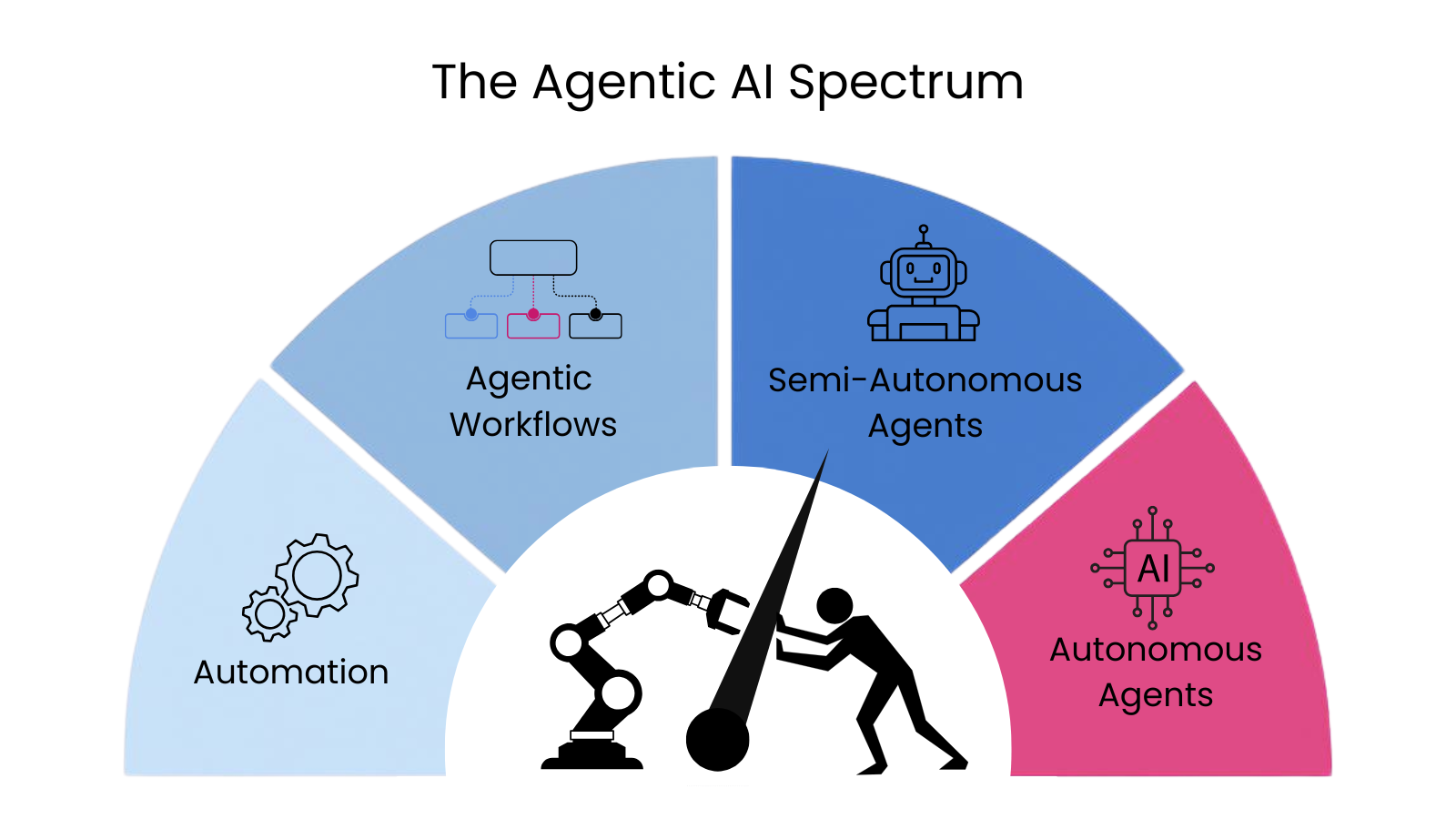

For a long time, though, the field couldn't agree on what counted as a "real" agent. Arguments raged. Every product was an "AI agent." Every developer had a different definition. Then in mid-2024, Andrew Ng — one of the most respected figures in AI — proposed a reframe: stop debating whether something is or isn't an agent, and start thinking about "agentic" as a spectrum. Some workflows are barely agentic (predefined steps with one LLM decision in the middle). Others are highly agentic (the AI plans, executes, adapts on its own). It's not a binary. It's a dial.

That's the framing I'll use here, walking through major moments that moved the dial, where we're at today, and where we're going.

March 2023 — AutoGPT. The modern explosion really started in March 2023, with a project called AutoGPT, released by a developer named Toran Bruce Richards. It was the first viral "give an AI a goal and watch it run" demo. Type in something like "research the best electric cars under $40k and write me a summary," and it would chain together searches, browsing, and summaries on its own. It became the fastest-growing project in GitHub's history at the time. It also mostly didn't work — it got stuck in loops, hallucinated constantly, and burned through OpenAI credits accomplishing very little. But it set the stage, and the race was on.

Late 2023 — agents in low-code tools. After AutoGPT, we started seeing "agents" show up in low-code/no-code platforms like n8n and Make.com. This was the first real attempt to add intelligence and autonomy to traditional deterministic workflows.

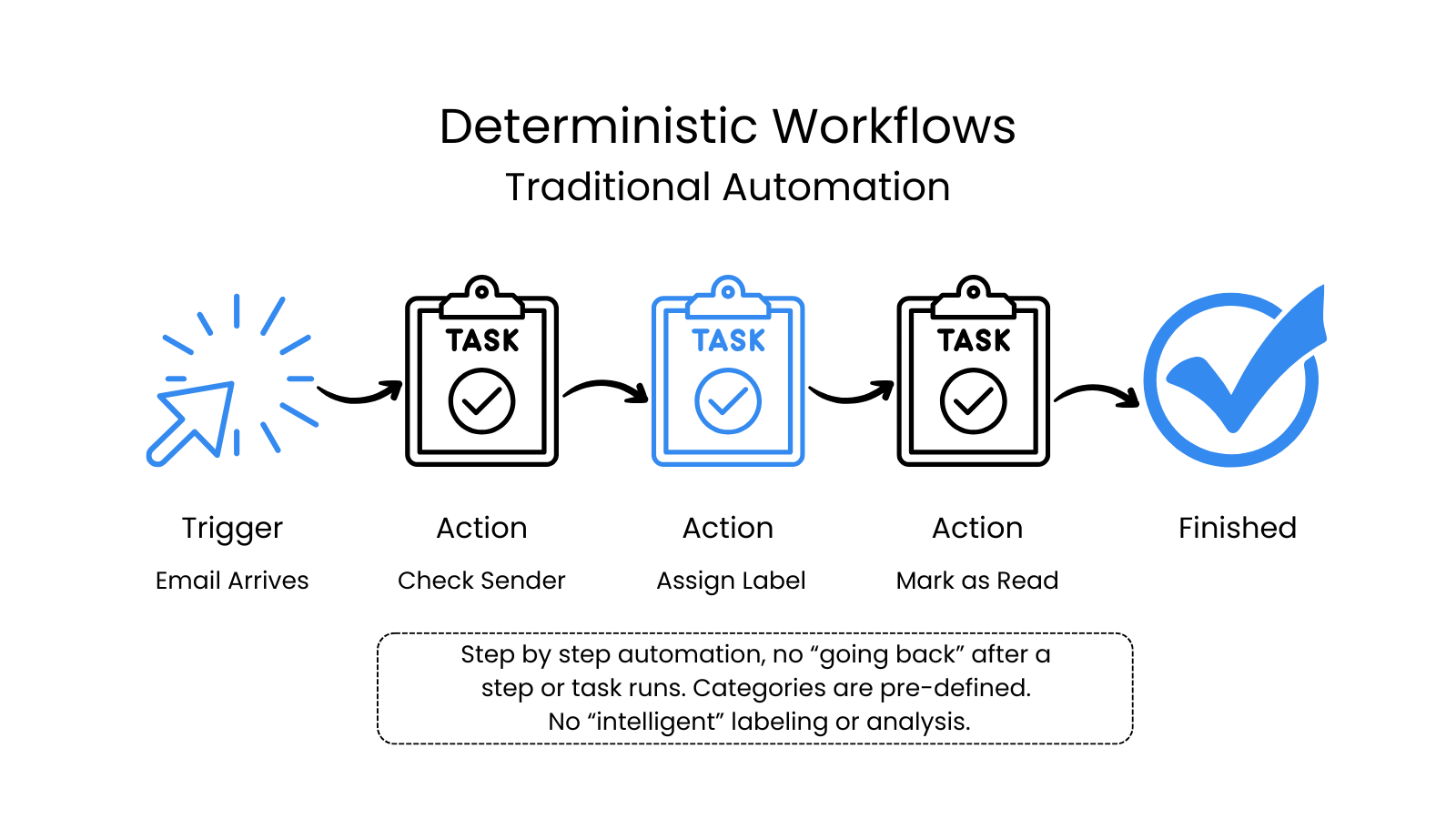

As a quick primer: deterministic workflows are predictable, trigger-based actions. If this, then that. A step-by-step process with no going back.

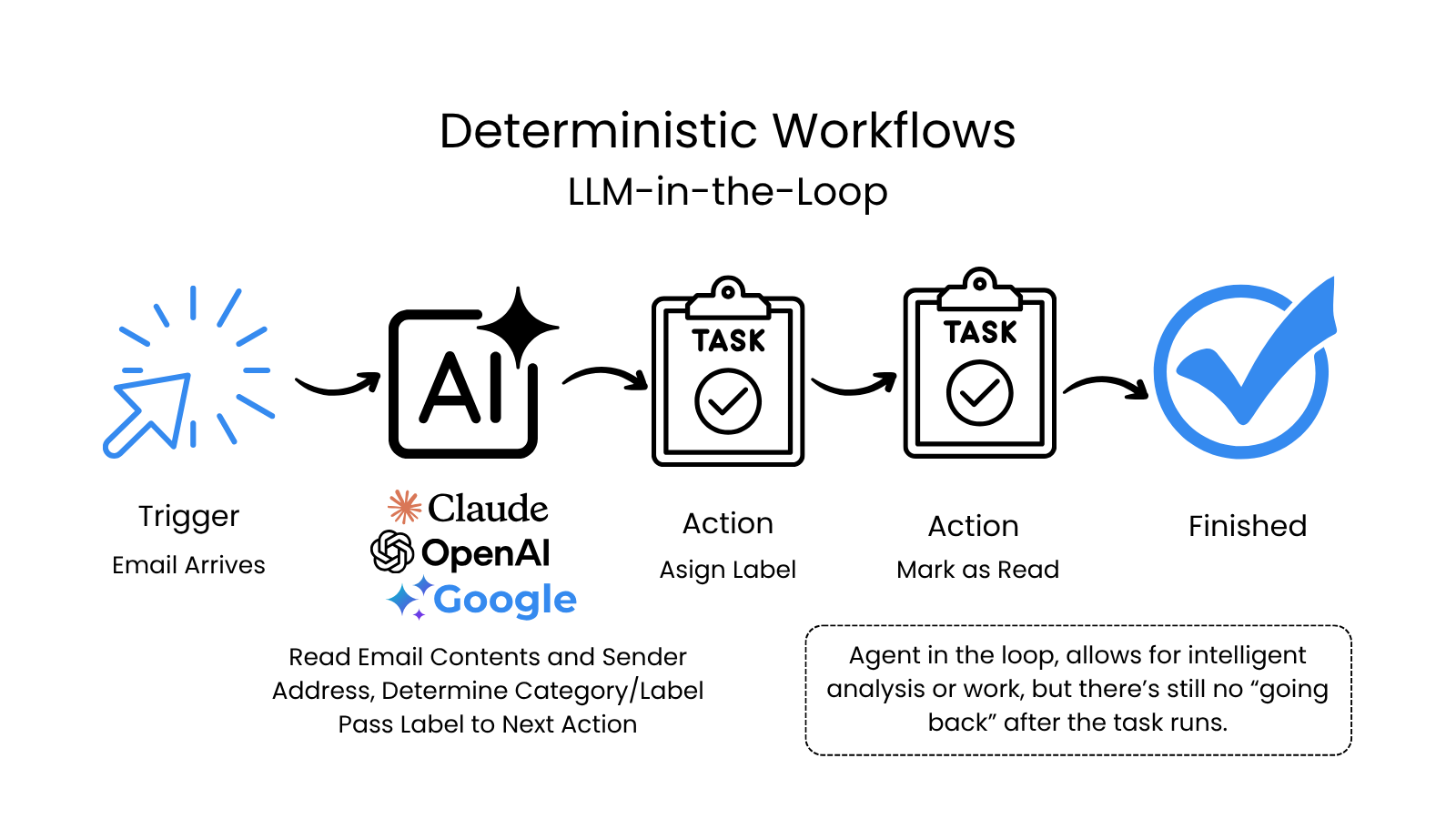

When ChatGPT came out, automation platforms were quick to integrate LLM intelligence into their workflow patterns, and we got slightly more intelligent workflows.

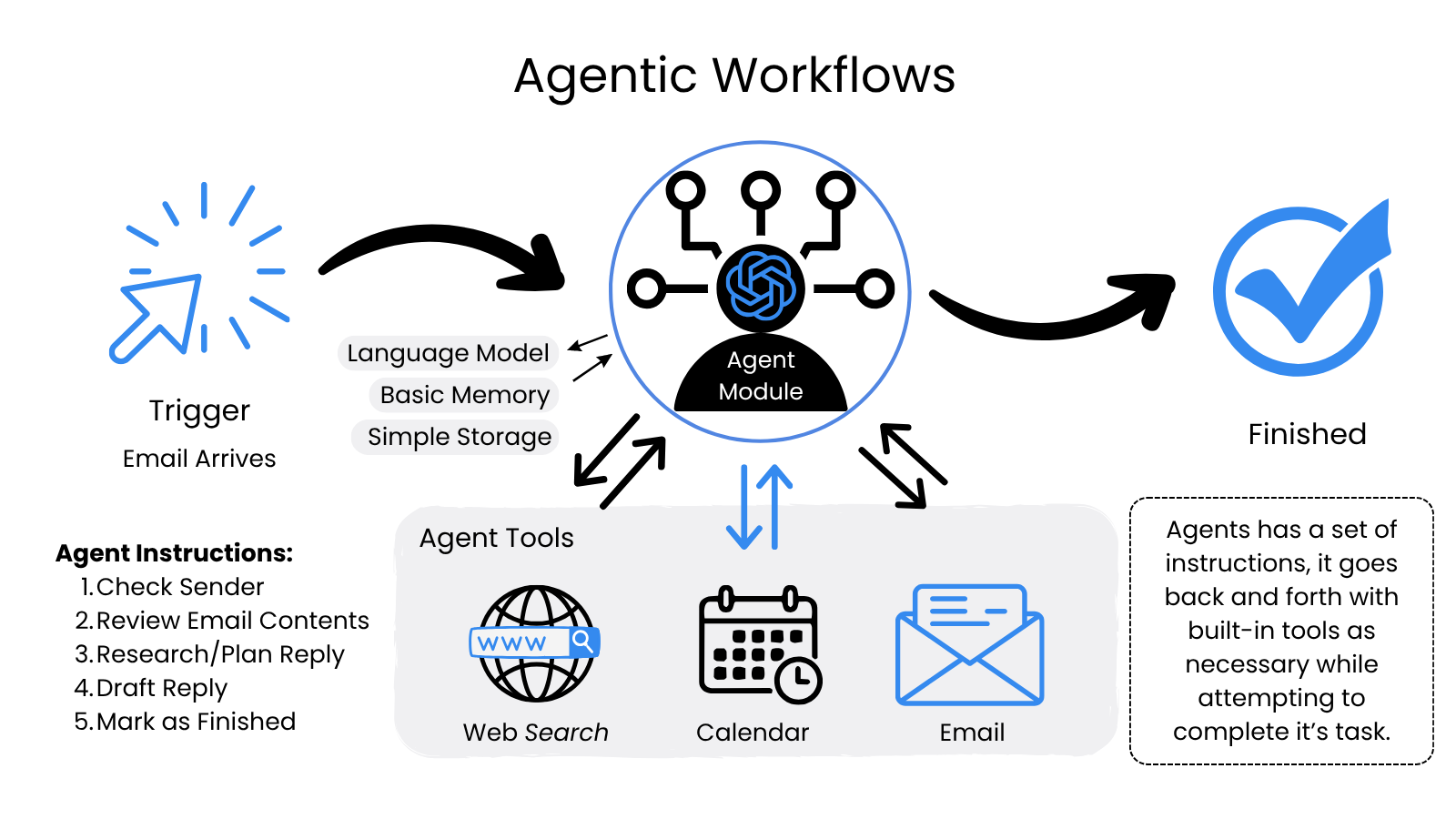

A short time later, n8n (and other automation platforms) started offering agent modules: basically an LLM with tools attached.

The benefit of adding an LLM agent module was flexibility — a higher degree of filtering and context understanding. The agent had a language model, basic memory, and simple storage, and it decided what tools to call instead of following a fixed sequence. But it was still fragile, and often created more problems than it solved. These early systems suffered the same issues as AutoGPT: hallucinations, memory drops, infinite loops.
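To make the difference concrete, here's a minimal sketch of a fixed workflow next to an agent-style loop. Everything in it is a stand-in I made up for illustration: the two tools, the `stub_model` function (a placeholder for a real LLM call), and the step cap. It isn't how n8n or any specific platform implements its agent module.

```python
from typing import Callable

# Hypothetical tools: plain functions the agent is allowed to call.
def search_inbox(query: str) -> str:
    return f"3 messages matching '{query}'"

def draft_reply(text: str) -> str:
    return f"DRAFT: {text}"

TOOLS: dict[str, Callable[[str], str]] = {
    "search_inbox": search_inbox,
    "draft_reply": draft_reply,
}

def deterministic_workflow(query: str) -> list[str]:
    """If this, then that: the same fixed steps in the same order, every time."""
    found = search_inbox(query)
    return [found, draft_reply(f"Re: {found}")]

def stub_model(goal: str, history: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM call. Returns (tool_name, argument) or ("done", "")."""
    if not history:
        return ("search_inbox", goal)
    if len(history) == 1:
        return ("draft_reply", f"Re: {history[0]}")
    return ("done", "")

def run_agent(goal: str, choose_next=stub_model, max_steps: int = 5) -> list[str]:
    """Agent loop: the model, not the workflow author, picks the next tool."""
    history: list[str] = []
    for _ in range(max_steps):  # hard cap so a confused model can't loop forever
        tool_name, arg = choose_next(goal, history)
        if tool_name == "done":
            break
        if tool_name not in TOOLS:  # a made-up tool name is logged, never executed
            history.append(f"ERROR: unknown tool '{tool_name}'")
            continue
        history.append(TOOLS[tool_name](arg))
    return history

print(deterministic_workflow("invoice"))
print(run_agent("invoice"))
```

With a well-behaved model the two paths produce the same result. The difference shows up when the model misbehaves: the step cap and the tool allow-list are the only things standing between you and the loops and hallucinations described above.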

These were billed at the time as intelligent agents, but they were a nightmare to manage.

Late 2024 onward — browser-using agents. Then came a slew of products billed as "agents" — tools that could use a web browser the way a person does:

- Oct 2024 — Anthropic releases Claude Computer Use

- Nov 2024 — Browser-use, an open-source alternative, launches a few weeks later

- Jan 2025 — OpenAI Operator (the "Computer-Using Agent")

- July 2025 — Perplexity Comet, an AI-first browser specializing in autonomous web search and task execution

- Oct 2025 — Microsoft Copilot Vision (major update)

These tools aren't really agents on their own — and they're definitely not "agentic" in the fuller sense. But they're worth mentioning because browser use is a necessity if we're aiming for autonomous agents that can do the same work that a human does.

A quick aside on the email example: browser-using agents don't actually map cleanly to email. You could ask one to open Gmail, read messages, and draft replies — but that's the wrong abstraction. AI doesn't need to see email like a human does. It just needs access to the underlying text. Browser use is about reaching software that wasn't built for AI; for email, that's a solved problem.

November 2025 — OpenClaw. This is where we took a real leap. Austrian developer Peter Steinberger released OpenClaw — the first semi-autonomous system to genuinely catch on. OpenClaw is to 2026 what AutoGPT was to 2023: the viral, fastest-trending GitHub project of its moment, the thing that made everyone suddenly care about agents again. And like AutoGPT, it's not quite as ready for mainstream use as the headlines suggest. History repeating (maybe).

What's different about OpenClaw is the architecture. It runs locally on your machine, communicates through whatever messaging apps you already use (WhatsApp, Slack, Telegram, iMessage), and can act proactively through a heartbeat scheduler — meaning it can wake itself up and do work without being prompted. It's the closest thing we have to a true autonomous agent, with a lot of limitations and very real security concerns.
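The heartbeat idea is simple enough to sketch in a few lines. This is my own illustration of a timer-driven wake-up, not OpenClaw's actual code: the `Heartbeat` class, its `todo` list, and the `tick` method are all hypothetical names, and the 30-minute interval is the figure I quote for OpenClaw later in this post.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Heartbeat:
    interval_seconds: float
    todo: list[Callable[[], str]] = field(default_factory=list)
    last_beat: float = float("-inf")  # guarantees the very first tick fires

    def tick(self, now: float) -> list[str]:
        """Run every queued task if a full interval has passed; otherwise do nothing."""
        if now - self.last_beat < self.interval_seconds:
            return []
        self.last_beat = now
        results = [task() for task in self.todo]
        self.todo.clear()  # each to-do item runs once
        return results

def run_forever(heartbeat: Heartbeat, poll_seconds: float = 1.0) -> None:
    """Blocking loop for real use. Nobody prompts the agent; the clock does."""
    while True:
        for line in heartbeat.tick(time.monotonic()):
            print(line)
        time.sleep(poll_seconds)

# Simulated clock so the example finishes instantly.
hb = Heartbeat(interval_seconds=30 * 60)
hb.todo.append(lambda: "Inbox summary: 3 unread")
first = hb.tick(now=0.0)        # fires: first beat
hb.todo.append(lambda: "Drafted reply to vendor")
too_soon = hb.tick(now=600.0)   # 10 minutes later: still asleep
second = hb.tick(now=1800.0)    # 30 minutes later: wakes up again
print(first, too_soon, second)
```

Passing the clock in as an argument keeps the wake-up logic testable. It also makes it obvious where the spend comes from: every beat that finds work to do is another round of model calls.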

OpenClaw is not for the average user. And, like AutoGPT, it burns through credits. I spent full days getting it set up (securely), let it run in the background for two weeks, and watched it burn through ~$200 in credits doing effectively nothing. That's the part nobody puts in demo videos. Autonomous agents are expensive. Every screenshot, every reasoning step, every tool call burns tokens. A reasonably busy autonomous agent running full-time can rack up hundreds to thousands of dollars per month per user. Per-token costs are coming down fast, but agent behavior expands to fill whatever budget is available — cheaper agents get used more aggressively, not for the same workload at a lower price.
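Here's the back-of-the-envelope math behind that, as a small sketch. Every number in it is an assumption I picked for illustration (steps per day, tokens per step, per-token prices), not a quote from any provider's price list. Plug in your own.

```python
def monthly_agent_cost(
    steps_per_day: int,
    input_tokens_per_step: int,
    output_tokens_per_step: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
    days: int = 30,
) -> float:
    """Estimated monthly spend in USD for an agent that runs every day."""
    # Price-weighted tokens consumed by a single step (a screenshot, a tool call, etc.).
    token_spend = (
        input_tokens_per_step * usd_per_million_input
        + output_tokens_per_step * usd_per_million_output
    )
    return steps_per_day * days * token_spend / 1_000_000

# Assumed workload: 500 steps a day, 8,000 tokens in and 500 out per step,
# at hypothetical prices of $3 and $15 per million tokens.
baseline = monthly_agent_cost(500, 8_000, 500, 3.0, 15.0)

# Prices halve, but the agent gets used three times as hard.
cheaper_but_busier = monthly_agent_cost(1_500, 8_000, 500, 1.5, 7.5)

print(f"baseline: ${baseline:,.2f}/month")
print(f"half price, 3x usage: ${cheaper_but_busier:,.2f}/month")
```

Under those assumptions the baseline comes to $472.50 a month. Halve the prices and triple the usage, and the bill goes up to $708.75, not down.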

If you're technical and have the time, you can absolutely set up OpenClaw to be useful and secure. But for the average person, it's a long way from being user-friendly.

For email, OpenClaw can do the things you'd actually want from a digital assistant: read, write, draft, send. It can act like a person in many ways — long-term memory, business context, persistent identity across sessions. It's the first tool that genuinely feels like a digital coworker. It's also, today, a $200/month digital coworker that frequently needs hand-holding.

Jan-Feb 2026 — Claude Cowork and task-based automation. Cowork came after OpenClaw, but it's important for several reasons: it doesn't require massive setup, it's relatively simple, it's from a trusted company, and it can often do the same tasks you might have asked OpenClaw to do — watch incoming emails, draft and send replies, flag priority items — without the security risks.*

To tie it back to email: you can ask Cowork to aggregate newsletters into a daily digest, draft and send replies, proactively send emails, delete spam, and so on. It runs on a schedule that you define. It's partially proactive — you can tell it to run every two hours to do X — but it doesn't have the same heartbeat functionality as OpenClaw. For most knowledge workers and most businesses, this is where the productivity gains are actually happening today. If you're trying to figure out where to start with agent-like work, start here.

*Cowork still has read/write access to files on your machine, so the risk profile isn't zero — it's just smaller.

March 2026 — NemoClaw. NVIDIA released NemoClaw, a wrapper around OpenClaw that adds enterprise-grade controls: sandboxing, policy guardrails, and the ability to run open models locally for privacy. That's the direction this is going — making autonomous agents safer and more controllable. But we're early. Very early.

NemoClaw also brings up the real issue with autonomous agents — the issue that has nothing to do with the technology itself.

Governance, Liability, and the Question Nobody's Asking

Here's the thing about autonomous agents that nobody likes to talk about: the technology is the easy part. We're going to solve the technical limitations. The cost will come down. The reliability will go up. The setup will get easier. None of that is in serious doubt.

What's actually going to slow this down — what's already slowing it down — is everything around the technology. How do you give an agent access to your business systems? Who is it acting on behalf of? What happens when it gets something wrong? Who's responsible? These aren't sci-fi questions. They're the questions every business is going to have to answer before they can deploy this stuff at scale.

Let me walk through the two big ones: governance and liability.

In terms of governance and control, we actually have a model already in place — the one we use for humans. The most effective autonomous agents will need their own email address, their own phone number, their own login credentials, their own identity. Not because they need a personal life, but because that's the only way to know what they did. If your agent acts under your credentials, every action it takes looks like it came from you. If it has its own identity, you have an audit trail. You can revoke access. You can investigate. You can assign permissions just like you do with people.
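As a rough sketch of what that looks like in practice, here's an agent with its own identity, a permission list, and an audit log that records every attempt, allowed or not. The class names, the permission strings, and the example address are all hypothetical; a real deployment would hang this off your existing identity provider rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class AgentIdentity:
    agent_id: str  # the agent's own account, not a human's login
    owner: str     # the person or team accountable for it
    permissions: set[str] = field(default_factory=set)
    revoked: bool = False

@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: AgentIdentity, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })

def perform(agent: AgentIdentity, action: str, log: AuditLog) -> bool:
    """Check permissions, then log the attempt whether or not it was allowed."""
    allowed = not agent.revoked and action in agent.permissions
    log.record(agent, action, allowed)
    return allowed

log = AuditLog()
assistant = AgentIdentity(
    "assistant-01@example.com", owner="ops-team", permissions={"email.send"}
)
perform(assistant, "email.send", log)         # allowed
perform(assistant, "payments.transfer", log)  # denied: never granted
assistant.revoked = True                      # pull access, same as offboarding a person
perform(assistant, "email.send", log)         # denied: access revoked

for entry in log.entries:
    print(entry["agent_id"], entry["action"], entry["allowed"])
```

Because every entry carries the agent's own ID, there's never a question of whether you or the agent sent that email.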

Responsibility is harder. What happens when an agent does something wrong? Is it the human behind the agent, or the agent itself?

In February 2024, Air Canada was held legally liable by a tribunal in British Columbia for misleading information given by its customer service chatbot. A grieving customer asked about bereavement fares. The chatbot made up a policy that didn't exist. The customer relied on it, paid full price, and sued. Air Canada argued — with a straight face — that the chatbot was "a separate legal entity responsible for its own actions." The tribunal was unimpressed. Air Canada paid.

That was a chatbot. Just answering questions. Now think about the same liability question when an agent is acting — sending emails, making purchases, scheduling appointments, transferring money. When an agent makes a mistake that costs your company money or exposes you to legal risk, who's responsible? The vendor? The platform? The model provider? You, for deploying it?

Right now, the legal answer is: you. The company that deploys the agent owns the consequences. A tribunal ruling like Air Canada's isn't binding everywhere, but it shows which way decisions are leaning, and I'd expect that to hold across most jurisdictions until specific AI agent liability frameworks emerge.

Which means before any responsible business deploys an autonomous (or semi-autonomous) agent, they need to answer questions almost nobody is asking yet:

- What can this agent do that I'm prepared to be liable for?

- What's the audit trail?

- How do we know it didn't go off the rails between checks?

- How do we roll back actions it took that we didn't want?

- Who owns the identity it's acting under?

These aren't sexy questions. They're not in the demo videos. But they're the questions that determine whether agentic AI gets deployed broadly in regulated industries — finance, healthcare, legal, government — or whether it stays a power-user toy for the next five years.

Fully Autonomous, Proactive Agents (the future, mostly)

This is where most of the agent hype is pointing, but it's not where the products are actually delivering yet.

You may have seen some of the names: Lindy (specialized agents acting as teammates), Devin (an autonomous coder), Viktor (a personal, Slack-based AI assistant), Salesforce's Agentforce and ServiceNow's Now Assist (enterprise platform plays), plus Microsoft Copilot Studio, Google Vertex AI Agents, and OpenAI's Codex Agents (just released). Every major platform has an agent story, and a thousand startups have a wedge product.

A lot of these are real. Some are quite good for narrow use cases. But the marketing is almost universally well ahead of the product. "Build an AI agent in minutes" is the most common version of this lie. You can build something in minutes. What you can't build in minutes is something that:

- Reliably handles real work without going off the rails

- Integrates with all your systems (most of which weren't designed for AI)

- Can reliably browse websites like a person

- Has appropriate guardrails for your industry's compliance requirements

- Won't hallucinate something that gets you sued

- Costs less than the human it's supposedly replacing

- Can be audited, monitored, and rolled back when it fails

That last bundle is what makes enterprise agent deployment an uphill battle. SOC 2, HIPAA, SOX — none of these compliance frameworks were written with autonomous agents in mind. Anyone telling a Fortune 500 to "just deploy agents" hasn't sat through a security review for one. The technology is real. The organizational readiness is mostly not.

Agent DNA: What Makes an Agent Tick

Okay, let's set the governance stuff aside and look at what's actually happening under the hood. Because the real productivity flip — once we figure out governance — is autonomy. Building proactive agents instead of reactive ones. Systems that go out and do work with minimal or no human input. For that to work, you need to understand what an agent is actually made of. And, perhaps unsurprisingly, it's almost as complicated as a human.

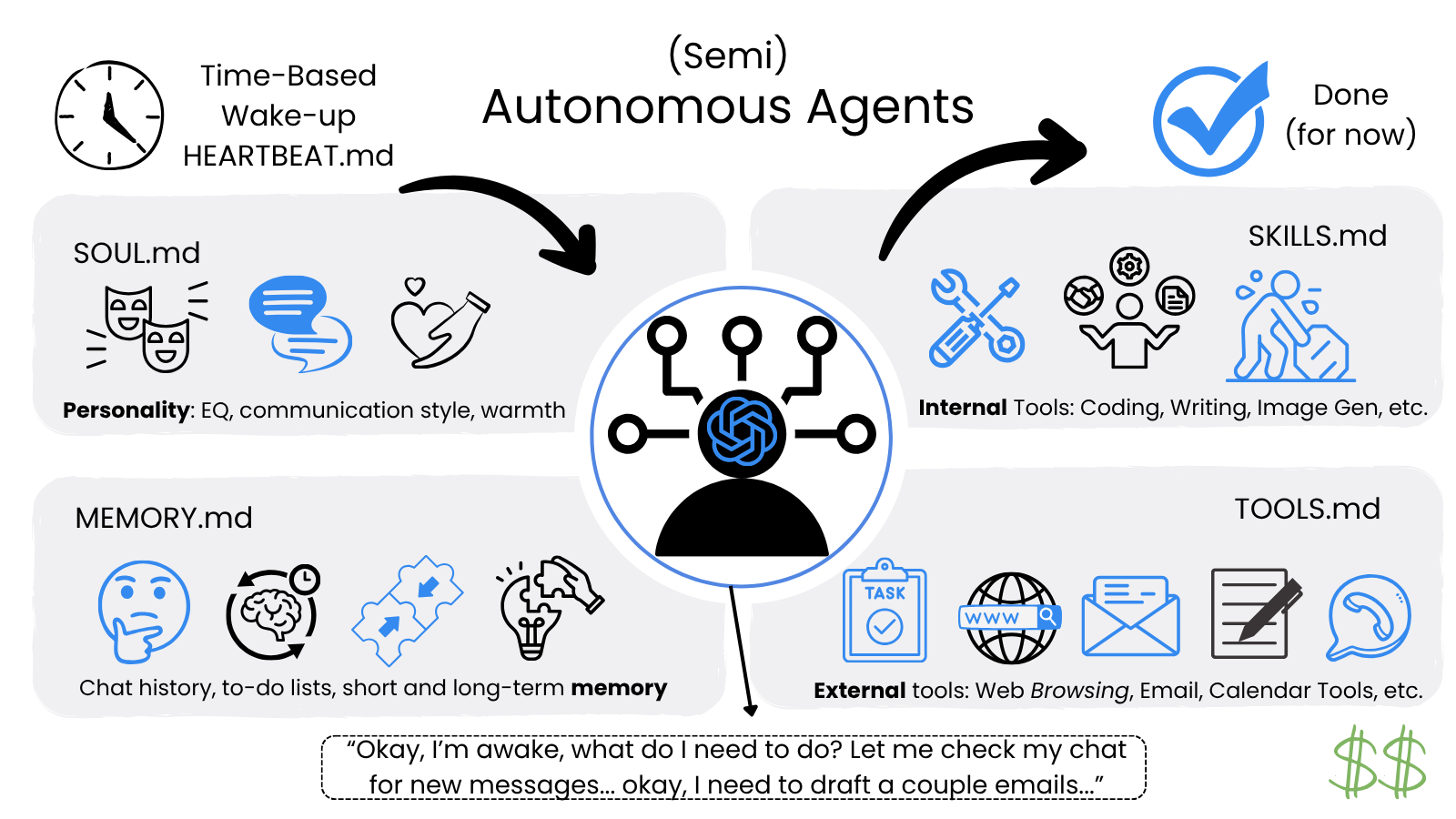

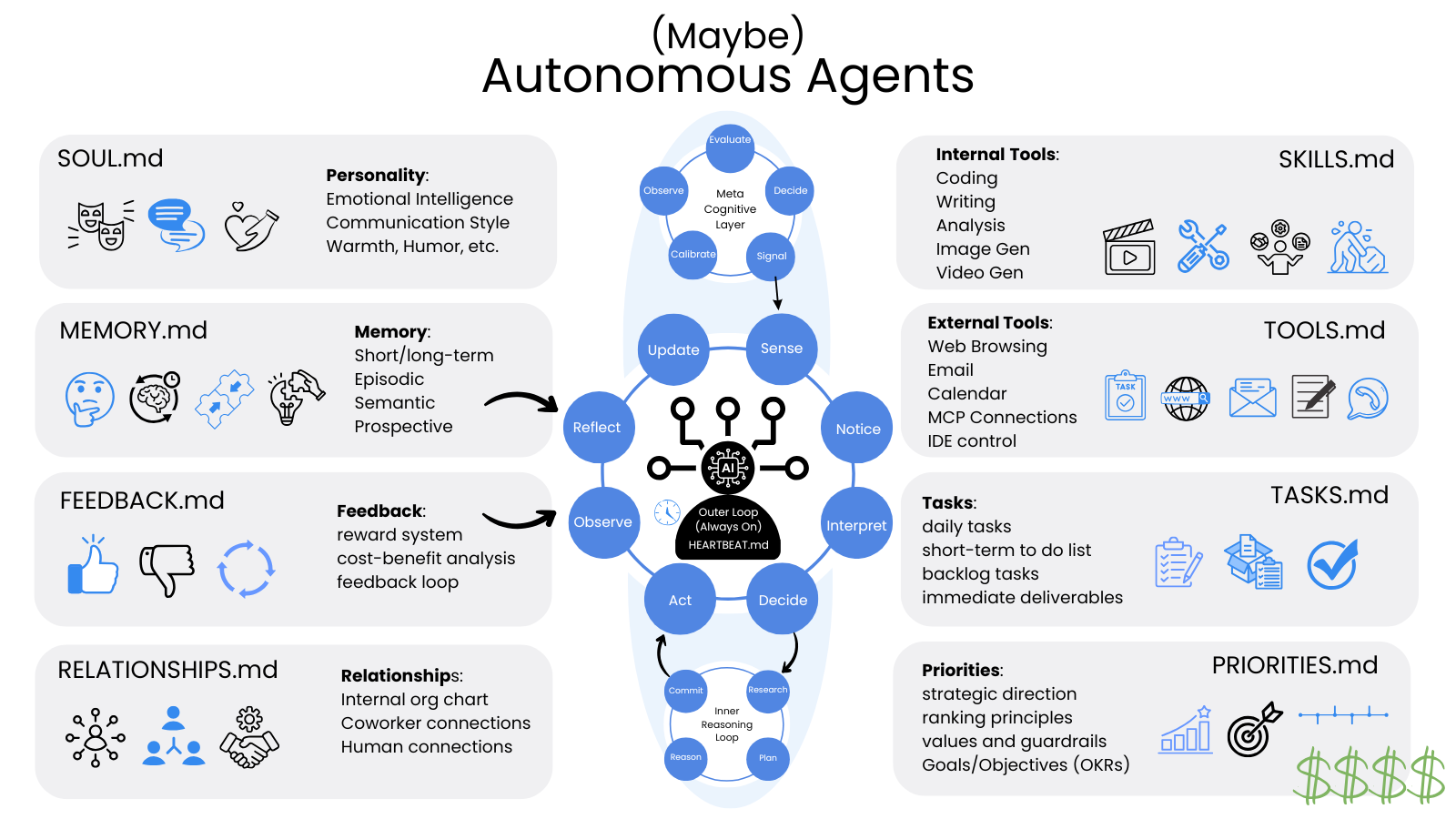

OpenClaw, the closest thing we have to autonomy, made waves because it nailed six things:

The 6 core "primitives" of OpenClaw

- Long-term memory (MEMORY.md). Unlike standard bots that "forget" once a chat ends, OpenClaw writes daily logs and curated facts to disk. It remembers your preferences and past decisions across weeks or months.

- Personality and principles (SOUL.md). This file defines the agent's "vibe," its core values, and its non-negotiables. It's what keeps the agent behaving consistently — for example, being "curt and challenging" or "protective of your time."

- Machine-level access (TOOLS.md). OpenClaw runs locally, with direct access to your shell, file system, and browser. It can execute terminal commands, edit local files, and navigate websites like a human.

- Proactive autonomy (HEARTBEAT.md). This is perhaps its most distinctive feature. Every 30 minutes, the agent "wakes up" and checks a to-do list. It can proactively message you with an email summary or finish a task while you sleep, without needing a prompt.

- Multi-channel presence (the gateway). It acts as a hub connecting one brain to all your messaging apps. You can start a task in Slack and check on it from WhatsApp; the agent maintains a single persistent session across all of them.

- Self-improving capability (SKILLS.md). OpenClaw can write its own code to create new "skills." If it encounters a task it can't do, it can browse documentation, write a script to handle it, and save that script to its skills/ folder for future use.
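To show why writing memory to disk matters, here's a minimal sketch of the idea: facts get appended to a markdown file and can be searched in a later session. The one-line-per-fact format and the `remember` and `recall` helpers are my own invention for illustration. This is not OpenClaw's actual MEMORY.md layout.

```python
import tempfile
from datetime import date
from pathlib import Path

def remember(memory_file: Path, fact: str, day: date) -> None:
    """Append one dated fact as a markdown bullet. The file outlives the chat session."""
    with memory_file.open("a", encoding="utf-8") as f:
        f.write(f"- {day.isoformat()}: {fact}\n")

def recall(memory_file: Path, keyword: str) -> list[str]:
    """Return every remembered line that mentions the keyword (case-insensitive)."""
    if not memory_file.exists():
        return []
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    return [line for line in lines if keyword.lower() in line.lower()]

# A temporary directory stands in for the agent's workspace.
with tempfile.TemporaryDirectory() as workspace:
    memory = Path(workspace) / "MEMORY.md"
    remember(memory, "Prefers morning meetings", date(2026, 1, 5))
    remember(memory, "Vendor invoices go to the finance inbox", date(2026, 1, 6))
    matches = recall(memory, "meetings")

print(matches)
```

A plain text file is about the crudest memory you can build, but it's enough to get the behavior a chat window can't give you: the agent that wakes up tomorrow knows what you told it today.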

This is a great start, but it's just the beginning of agent architecture, and there's plenty of room for improvement.

Looking ahead, I'd expect each of those primitives to deepen. The 30-minute heartbeat becomes a continuous cognitive loop: observe, sense, interpret, decide, act, reflect, update, repeat. Memory splits into layers that mirror how human memory actually works: short-term scratch space, long-term persistent storage, episodic (what happened last time), semantic (general knowledge), prospective (things to do in the future), and meta-memory (knowing what you know and don't).

Personality moves beyond "vibe" to include communication style, risk tolerance, EQ, warmth, a sense of humor — think TARS in Interstellar. The single SOUL.md splits into a richer self: SELF.md (strengths and weaknesses), RELATIONSHIPS.md (its place among other agents and people), PRIORITIES.md (what matters when goals conflict). Tools and skills keep expanding — internal capabilities, external integrations, and a growing library of self-written skills.

Each of these on its own is a small upgrade. Stack them together and you start to get something that doesn't just execute tasks, but works the way a person works: remembering, planning, adapting, improving.

None of this is fully built today; OpenClaw nailed six primitives, and the rest is conjecture. But the trajectory is clear, and every one of these capabilities already exists somewhere — in research papers, prototypes, someone's GitHub repo. Stitching them together into something reliable, affordable, and safe is the work of the next few years.

So Where Are We, Really?

Putting it all together: in 2026, we're at the early stage of the agentic era. We have:

- Genuinely useful task-based agents that run on a schedule (Claude Cowork-class tools)

- Powerful reactive agents that use tools when prompted (mainstream chat AIs with MCP, short for Model Context Protocol, plus browsing and code execution)

- Experimental autonomous agents that mostly work for narrow, well-supervised use cases

- A flood of platforms promising more than they deliver

- Real but unresolved questions about cost, governance, and liability

- A broad consensus that this will be a much bigger deal in 2-3 years than it is today

That last point is the important one. The headlines suggesting that the average professional will be "managing a team of agents" by next quarter aren't wrong about the destination — they're wrong about the timing.

I think we get to a world where most knowledge workers genuinely do have a small team of AI agents handling routine work. But it's going to take 2-3 more years, not 2-3 more quarters. And the bottleneck isn't the technology. The bottleneck is everything around the technology: governance, identity, cost models, audit trails, regulatory acceptance, organizational change management — and the simple fact that most people don't yet know what to delegate.

That last one is worth expanding on, because it's important for everyone. The question I keep asking clients is: if you had a smart, tireless personal assistant who could do your busywork, what would you actually have them do? Most people can't answer that without thinking hard about it. Turns out delegation is a skill, and most knowledge workers have never had to practice it.

What's Coming Next

If I had to bet on the next 12-24 months in agentic AI, here's my forecast:

Cost will drop, but capability will absorb the savings. Cheaper agents will be used more aggressively, not used for the same workload at lower cost. Total spend will rise, not fall.

Specialized agents will outperform general ones for real work. A customer support agent trained on your specific product and policies will beat a general-purpose agent every time. The winners in the next wave will be domain-specific.

Identity and audit will become the bottleneck. Every enterprise agent deployment will require answering "who is this agent acting as, and how do we prove what it did?" Tools that solve this will become very valuable.

The "manage your team of agents" narrative will arrive — but slowly. It'll start with power users and developers, expand to early-adopter knowledge workers, and reach the mainstream professional sometime in 2027-2028. Not 2026. Not Q3.

Regulation will hit before maturity. Some jurisdiction will pass an AI agent liability law in the next 18 months that reshapes how these things get deployed. Maybe the EU. Maybe California. It won't be perfect, but it'll force the conversation.

The organizations that win will be the ones that build the muscle now. Not by deploying autonomous agents at scale (that's premature), but by getting their teams comfortable with task-based agents, building the workflow automation backbone, and learning to delegate to AI at the points on the dial where it actually works today.

What This Means for Your Job

I'll close where most people start: with the question of what all this means for the average professional's career.

Short answer: agentic AI is real, it's coming, and it's going to reshape how knowledge work happens — but the timeline is longer than the breathless coverage suggests, and the kind of disruption is more interesting than "AI takes your job."

The professionals who thrive in this transition will be the ones who learn to direct the work, not just do it. The ones who build comfort with AI tools at every level. The ones who develop the rare and underappreciated skill of knowing what to delegate, and how to specify the delegation clearly enough that an agent (or a person) can actually do it.

That's a much bigger topic, and it's the one I want to dig into next. Because the question on most people's minds isn't really what is agentic AI? — it's will AI take my job? And the honest answer to that is more complicated than most of the takes you're reading. There are real reasons for optimism, and real risks people aren't paying enough attention to.

That's the next post. Stay tuned.